🏛️WE'RE BACK - Understanding the Fundamentals and Making Moves.

What I've been up to in the month of June. Key accomplishments, lessons, and finding direction for the future.

It’s been a little over a year since I published my last newsletter.

And, a LOT has happened since then - starting my own company (everything about that here), working on + deploying projects with Actionable (the company I work for), learning calculus, and starting on a new journey to become a master in the deep learning field.

The last year for me wasn’t the greatest in terms of raw accomplishments. On the other hand, the learning - probably all that really matters in the final analysis - has been off the charts, both from a mindset view and a domain-specific one.

That was the theme for this month - learning, growing, and ruthlessly attacking problems to make progress in key areas of my life. Adhering to my principles to become the best version of myself possible.

Let’s get into it 🔥

🤖What I’ve been working on - AI Papers and Projects.

Last month, I realized that, despite my practical knowledge in deep learning, my theoretical understanding was lacking - and to truly innovate in any field, it’s imperative to know both.

So, I’ve resolved to publish one AI project a week, with each one more difficult and complicated than the last. By July 15th, my goal is to have 4-5 high-standard projects published, with a full paper written on a larger project by August.

My ultimate goal with this? To get in touch with and work on an exciting DL problem with an expert in the field - working together with a true master.

So far I’m two weeks in with two projects, and they alone have drastically improved my mathematical and theoretical understanding. The first of these was Replicating Maxout Networks - implementing the Maxout activation from scratch in PyTorch and FastAI, and comparing model performance against ReLU networks to determine whether Maxout’s better function approximation translates to real-world benefit.
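For anyone who hasn't met Maxout before, here is a minimal sketch of the idea in plain Python (not the PyTorch/FastAI code from the project). Each output unit takes the max over k affine pieces of the input. The names `maxout` and `param_count`, and the layer sizes used at the bottom, are made up for illustration.

```python
def maxout(x, weights, biases):
    """Maxout layer: each output unit is the max over k affine pieces.

    x:       list of d_in floats
    weights: k matrices, each with d_out rows of d_in floats
    biases:  k vectors, each with d_out floats
    """
    d_out = len(biases[0])
    out = []
    for j in range(d_out):
        # One affine piece per (W, b) pair; unit j keeps the largest one.
        pieces = [
            sum(w * xi for w, xi in zip(W[j], x)) + b[j]
            for W, b in zip(weights, biases)
        ]
        out.append(max(pieces))
    return out


def param_count(d_in, d_out, k=1):
    """Weights plus biases for k affine pieces (k=1 is a plain linear layer)."""
    return k * d_out * (d_in + 1)


# ReLU as a special case: one piece is the identity, the other is all zeros.
identity = [[1.0, 0.0], [0.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
weights = [identity, zeros]
biases = [[0.0, 0.0], [0.0, 0.0]]

# The negative input is clipped to zero, just like ReLU would do.
print(maxout([-1.0, 2.0], weights, biases))

# Hypothetical layer sizes: a Maxout layer with k pieces has k times the parameters.
print(param_count(784, 128, k=1), param_count(784, 128, k=4))
```

The second print shows the cost side of the trade: with k pieces, a Maxout layer carries k times the weights and biases of a linear layer of the same shape.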

The results were quite interesting! Despite higher function-modelling capabilities, all variations of the Maxout network (with and without Dropout and biases) fell short by ~3% in terms of test accuracy against ReLU networks.

My hypothesis for this was that the extra parameters needed to train Maxout made the model more prone to overfitting - i.e. it would need more training time and samples to achieve the same performance, even if the relationship it captured was more accurate.

That might also explain why Maxout isn’t used as often in the real world. It might be more capable in theory, but training time and compute are key bottlenecks that also need to be taken into consideration - is the extra accuracy worth the increased resource consumption?

All in all, Replicating Maxout Networks (full project here with a more detailed mathematical breakdown) catalyzed my understanding of activation functions, the math behind them, how they impact feature spaces, the importance of weight initialization, and FastAI/PyTorch intricacies - this was super fun and a fantastic learning experience.

The second project I delved into was replicating the Adam Optimizer from Scratch (check out the full project here). After spending 6+ hours reading the original paper and taking hundreds of notes, I felt comfortable enough + had a strong enough mathematical intuition to begin implementing the algorithm from the ground up in PyTorch.
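As a rough illustration of what the algorithm does, here is a minimal sketch of the Adam update rule in plain Python. It is not the PyTorch implementation from the repo. The default hyperparameters are the ones suggested in the Adam paper; the function name `adam_minimize` and the toy quadratic are assumptions made up for this example (the quadratic is not one of the six test functions).

```python
import math


def adam_minimize(grad_fn, x0, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    """Minimize a function with Adam, given a function that returns its gradient."""
    x = list(x0)
    m = [0.0] * len(x)  # running mean of gradients (first moment)
    v = [0.0] * len(x)  # running mean of squared gradients (second moment)
    for t in range(1, steps + 1):
        g = grad_fn(x)
        for i in range(len(x)):
            m[i] = beta1 * m[i] + (1 - beta1) * g[i]
            v[i] = beta2 * v[i] + (1 - beta2) * g[i] ** 2
            # Bias correction: both averages start at zero, so rescale early steps.
            m_hat = m[i] / (1 - beta1 ** t)
            v_hat = v[i] / (1 - beta2 ** t)
            x[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x


def quadratic_grad(p):
    """Gradient of the toy bowl f(x, y) = (x - 3)^2 + (y + 1)^2."""
    return [2 * (p[0] - 3), 2 * (p[1] + 1)]


# A larger learning rate than the default so the toy problem converges quickly.
result = adam_minimize(quadratic_grad, [0.0, 0.0], lr=0.1, steps=2000)
print(result)  # should land close to the minimum at (3, -1)
```

The per-parameter division by the square root of `v_hat` is what makes the step size adaptive: parameters with consistently large gradients take proportionally smaller steps.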

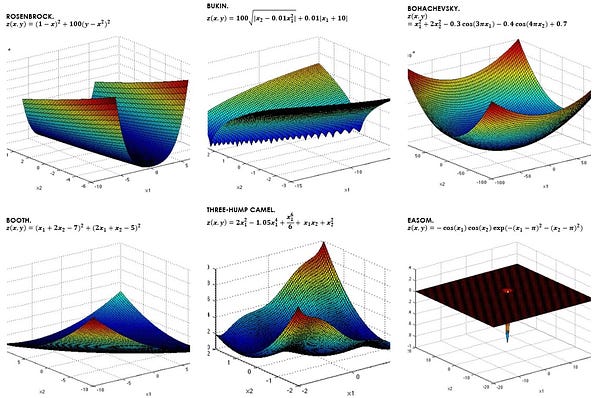

After building the Adam optimizer from the ground up, I then went ahead and tested it on the above 6 functions against other common optimization methods (SGD, RMSProp, and AdaGrad) to see if my implementation would hold up.

Surprisingly, it did rather well! While it generally took an additional 100-500 steps to converge, it was on average better at avoiding getting stuck in local minima. Interestingly, all the optimizers with the exception of AdaGrad failed at optimizing the last Easom function (the only non-convex function of the bunch).

I broke down all the math and the optimization algorithm, and thoroughly analyzed the results, in the GitHub repo - so check that out if you want a high-quality and detailed description of what went into the project. My goal is to frame these projects much like experiments trying to answer an important question, so the repositories are almost like mini-reports.

After this project, I’m more interested than ever in the power of ensembles in DL. I’d already heard about them once in terms of models from this paper (highly recommend), so hearing about ensembling at the optimization level again from this project is particularly intriguing. I wonder what strides can be made by focusing on ensemble-based deep learning?

These are the questions I’ll be looking to answer over the next couple of weeks. Right now, I’m in search of the next project to replicate - something to keep expanding my comfort zone and catalyzing my understanding of the field.

Excited to see what comes next!

🛣️Staying on the Path + Goals.

Aside from my work in AI, I’m also trying to improve my understanding of data structures and algorithms + combinatorics. I’ve found that those fields in particular are directly tied to the ability to think, and they’re incredibly useful skills to have day-to-day. Physics is a good one to include here as well!

I’m also thinking of taking up boxing/a physical sport as a means of disciplining the body and just getting outside more. In terms of disciplining the mind, I’ll be taking up daily walks and meditation as a kind of “reset” + allowing for better cognitive function.

Ultimately, this past year has taught me more than ever about what it takes to be better. And, I’m excited to see how those lessons can be applied in this next phase of life!

See you guys next month! Let’s crush this. 🔥

Aditya Dewan

Thanks for reading! If you want more monthly updates like this, consider subscribing.

Find me on:

GitHub: https://github.com/thetechdude124

Twitter: https://twitter.com/adidewan124

LinkedIn: https://www.linkedin.com/in/aditya-dewan-7711b91b3/

Instagram: https://www.instagram.com/aditya.de_wan/